Consent Isn’t Just an Ethical Safeguard: It’s a Data Quality Strategy

20,000 People Said Yes. So Why Are Companies Afraid to Ask?

There’s a story the tech industry tells itself about consent: “If you ask people for explicit permission to use their data, they’ll say no. So you have to be creative about how you get it. Bury it in terms. Use broad language. Make the product so useful that people click ‘I agree’ without thinking about what they’re agreeing to.”

I know this because an investor told me to do exactly that. Build a product that collects voice data for free. Train my own models. That’s the moat.

I didn’t take that advice. Instead, I asked.

With Destined AI, we asked people directly: will you contribute your voice data to help improve AI? No burying it in terms of service. No vague “new features” clauses. A clear ask with a clear purpose.

Over 20,000 people said yes.

So the excuse doesn’t hold up. People will consent when you’re honest about what you’re building and why. The problem was never that people don’t want to participate. The problem is that companies don’t want to be accountable for what they do with that participation.

What Happens When You Skip Consent

Here’s the part nobody talks about. Skipping consent doesn’t just create a legal problem or an ethical problem. It creates a product problem.

As someone from the South, I know firsthand that most voice AI systems simply don’t understand our voices. It’s a familiar frustration, whether it’s personal assistants misunderstanding everyday instructions or something more serious, like ambient scribes in healthcare missing critical details.

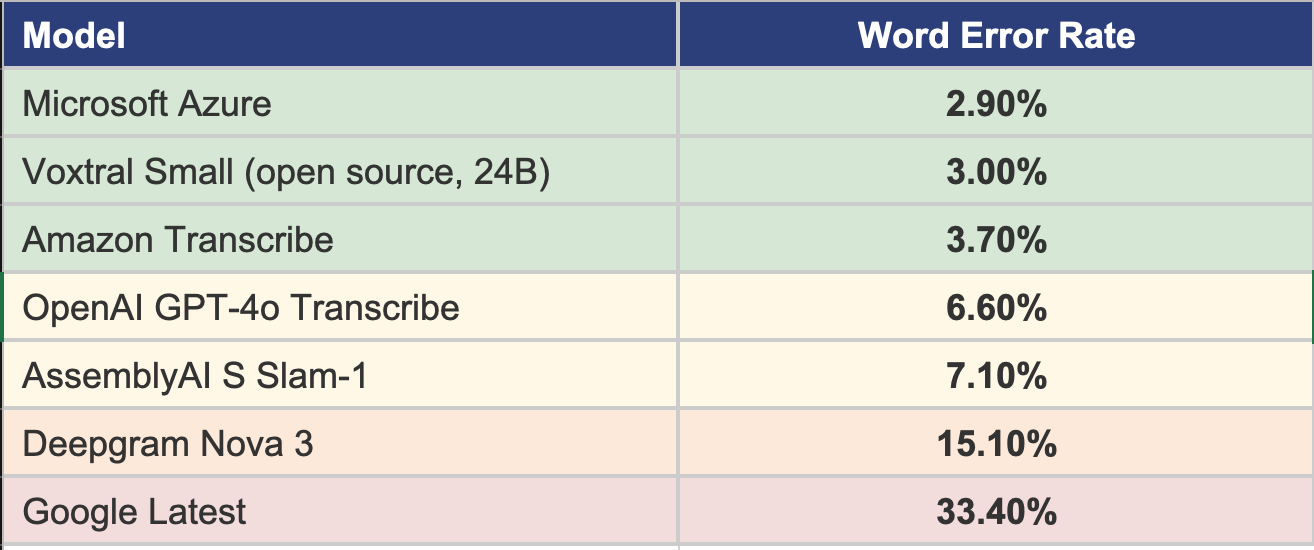

I decided to personally test every major speech-to-text platform so others don’t have to go through the same trial and error. I ran a subset of data through eight of the top models, including OpenAI, AssemblyAI, Deepgram, Amazon Transcribe, Google Speech, Azure Speech, and the open-source Voxtral model.

The results:

Word error rates ranged from 2.9% to 33.4%. Most of them failed badly on women from New Orleans. Not edge cases. Not unusual accents. Just people talking the way they actually talk.
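For readers wondering what those percentages measure: word error rate (WER) counts the word substitutions, deletions, and insertions needed to turn a model’s transcript into the reference transcript, then divides by the number of words in the reference. Below is a minimal Python sketch of that calculation, assuming simple lowercasing and whitespace splitting (real benchmarks usually normalize punctuation and numbers too). The function name `word_error_rate` and the sample sentence are illustrative only, not the exact pipeline or data behind the numbers above.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER = (substitutions + deletions + insertions) / reference word count."""
    # Assumed normalization for this sketch: lowercase, split on whitespace.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # dist[i][j] = fewest edits turning the first i reference words
    # into the first j hypothesis words (Levenshtein distance over words).
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # insert all j hypothesis words

    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution (or exact match)
            )

    return dist[len(ref)][len(hyp)] / len(ref)


# Made-up example: a Southern phrase with one misheard word out of seven.
reference = "I'm fixing to go to the store"
hypothesis = "I'm fishing to go to the store"
print(f"{word_error_rate(reference, hypothesis):.1%}")  # prints 14.3%
```

Because insertions count against the model, WER can exceed 100% on a badly garbled transcript, and in short, everyday utterances a single misheard word moves the score a lot.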

That’s what happens when you build AI on data that was scraped, not given. You end up with models trained on the voices that were easiest to collect, not the voices that need to be heard. The data is biased because the collection was biased. And the collection was biased because nobody asked.

Consent isn’t just an ethical safeguard. It’s a data quality strategy.

Why Companies Really Skip Consent

So if people will say yes when you ask honestly, why do companies skip consent?

Based on conversations with hundreds of people, I see three main reasons.

First, they don’t ask, so you don’t get the chance to say no. If you never ask the question, you never get a no. You just take. And then you argue later that the terms covered it. That’s exactly what happened with Adobe: the agreement said “distribute to end users” and “promote your work.” They used it for AI training. When I challenged it, they argued the language was broad enough to cover it. They never asked because asking creates a record. Not asking keeps things vague.

Second, they think you will say no. And honestly, some people would. But as we proved with 20,000 contributors, most people are willing to participate when you’re transparent about what you’re doing and why. The industry assumption that people will refuse is based on the industry’s own behavior: people don’t trust you because you’ve given them reasons not to. Fix the trust, and the consent follows.

Third, they think you’ll ask for money. And that eats into their profits. If you ask a creator for explicit permission to use their work for AI training, the creator might say “sure, but what’s the compensation?” And suddenly the “free” data isn’t free anymore. The whole business model of scraping and training depends on the data being costless. Consent introduces a price. And companies don’t want to pay it.

It’s never been about what’s possible. It’s about what’s profitable.

The Idea Graveyard

One of my biggest fears is ending up in what I call the idea graveyard. It’s a place where dreams were never pursued. Passions were never followed. Ideas that could have changed things just… stayed ideas.

I think about this a lot when it comes to what we’re building. The easy path would have been to take the investor’s advice. Collect data quietly. Build models fast. Move on. That’s the path most companies take because it’s faster and cheaper and nobody asks questions until it’s too late.

But the version of Destined AI that cuts corners on consent? That idea deserves to stay in the graveyard. The version that empowers the community, that asks people, that gets explicit permission, that builds technology good enough to actually understand a woman from New Orleans or a rural Southerner: that’s the one worth building. Even if it’s harder. Even if it’s slower.

Consent Is the Foundation

The promise of AI means nothing if it fails to work reliably in real-world scenarios. 20,000 people proved that explicit consent works. They said yes because we asked honestly. Imagine what’s possible if more companies did the same.

More reliable AI. More breakthroughs. More advancements.

Companies don’t skip consent because people won’t agree. They skip it so you can’t say no, because they think you’ll say no, or because they think you’ll ask for money. But building AI that doesn’t work for the people it should serve? That’s more expensive than any of it.